A small shift in how I’m starting to think about AI systems

My boss shared a post on X (formerly Twitter) from Ramp about how they structure their systems around signals and monitoring, using tools like Datadog. Nothing particularly flashy, just a clear emphasis on systems that constantly emit signals, with those signals driving what gets attention. They’re not framing this as an AI or “agent” system. It’s just a really clean, signal-driven monitoring setup.

I tend to learn by building. I need to get my hands dirty to really understand what’s going on. What’s changed with AI is that you can now build something small and immediately observe how it behaves.

So I took the pattern Ramp described and tried to recreate it, mostly to see how far it could go.

Most of what I’ve built with AI has been centered around getting models to do things. Generate code, complete tasks, orchestrate workflows. That framing still works, but it assumes you already know what needs to happen.

In reality, most systems don’t fail loudly. They get stuck in ways that are easy to miss unless you’re actively looking.

That’s where my thinking started to diverge.

What Ramp’s approach made me think about is treating systems as something that’s always talking through signals, and asking what it would look like if AI were focused on interpreting that stream rather than just acting on commands.

So I built a small model to explore that idea.

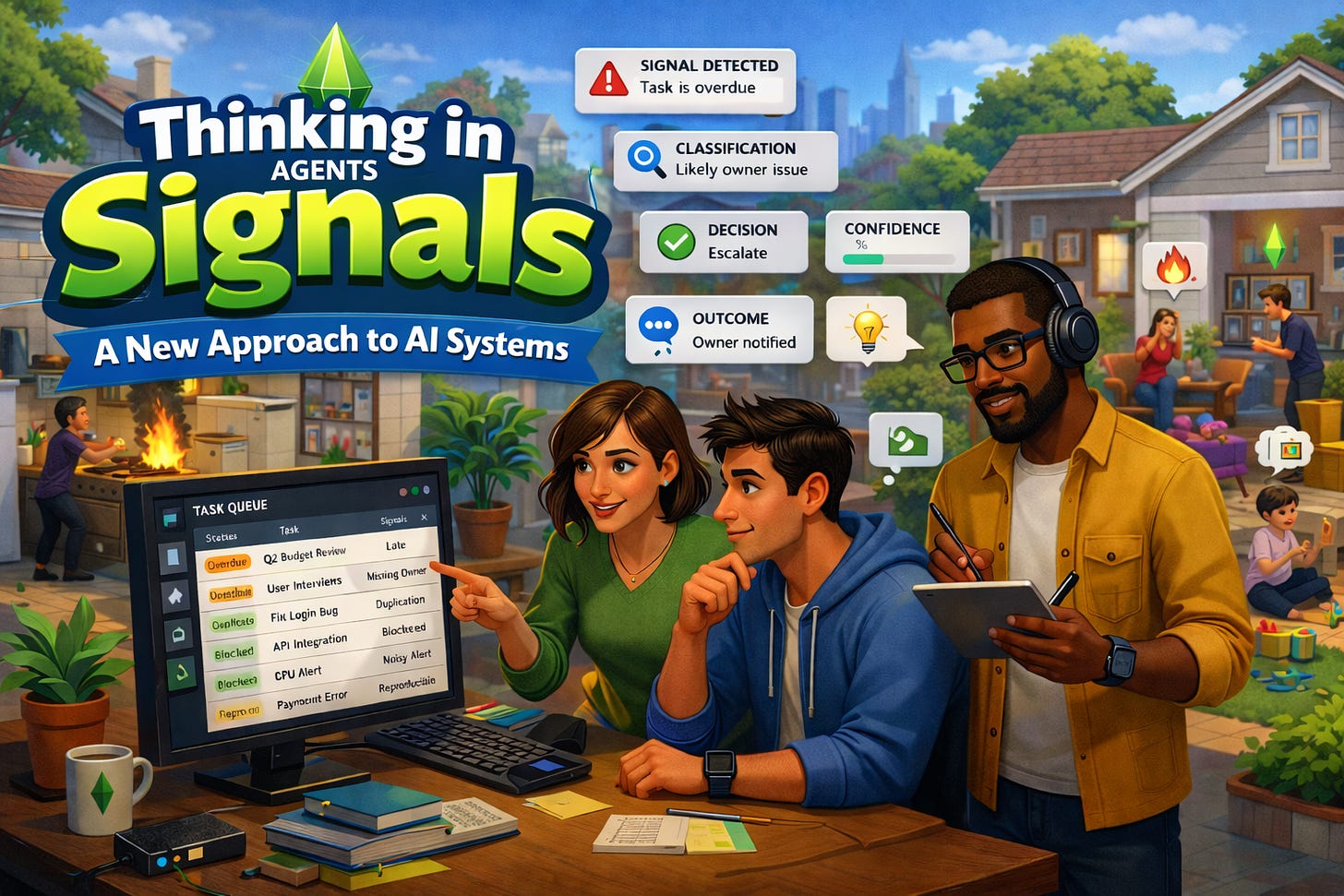

It’s an interactive demo of a self-triaging to-do system. At a high level, it simulates a work queue that watches tasks for operational problems like overdue work, missing owners, duplicate effort, noisy alerts, and reproducible issues. As the system runs, it detects signals, classifies what matters, decides whether something should be watched or escalated, and records the outcome.
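To make that loop concrete, here’s a rough sketch of the detect → classify → decide → record cycle in Python. It’s hypothetical, not the demo’s actual code: the `Task` fields, the signal names, the `WEIGHTS` table, and the `ESCALATE_AT` cutoff are all assumptions I picked for illustration.

```python
from dataclasses import dataclass
from datetime import date
from typing import List, Optional

@dataclass
class Task:
    id: str
    title: str
    owner: Optional[str]
    due: date
    alert_count: int = 0      # hypothetical: how many alerts this task has fired
    reproduced: bool = False  # hypothetical: whether the issue has been reproduced

@dataclass
class Decision:
    task_id: str
    signals: List[str]
    action: str  # "ignore", "watch", or "escalate"
    reason: str

# Hypothetical weights: how much each signal contributes to the need for attention.
WEIGHTS = {"overdue": 2, "missing_owner": 2, "duplicate": 1,
           "noisy_alert": 1, "reproducible": 3}
ESCALATE_AT = 4  # hypothetical cutoff between "watch" and "escalate"

def detect_signals(task: Task, all_tasks: List[Task], today: date) -> List[str]:
    """Inspect one task and return the operational signals it is emitting."""
    signals = []
    if task.due < today:
        signals.append("overdue")
    if task.owner is None:
        signals.append("missing_owner")
    # Duplicate effort: another task with the same (case-insensitive) title.
    if any(t.id != task.id and t.title.lower() == task.title.lower() for t in all_tasks):
        signals.append("duplicate")
    if task.alert_count >= 5:
        signals.append("noisy_alert")
    if task.reproduced:
        signals.append("reproducible")
    return signals

def triage(tasks: List[Task], today: date) -> List[Decision]:
    """Detect, classify, decide, and record an explainable outcome per task."""
    log = []
    for task in tasks:
        signals = detect_signals(task, tasks, today)
        score = sum(WEIGHTS[s] for s in signals)
        if score >= ESCALATE_AT:
            action = "escalate"
        elif score > 0:
            action = "watch"
        else:
            action = "ignore"
        reason = f"score={score}: " + (", ".join(signals) or "no signals")
        log.append(Decision(task.id, signals, action, reason))
    return log

if __name__ == "__main__":
    tasks = [
        Task("T1", "Fix login bug", None, date(2024, 1, 1), reproduced=True),
        Task("T2", "Update docs", "ana", date(2030, 1, 1)),
        Task("T3", "Fix login bug", "raj", date(2030, 1, 1)),
    ]
    for d in triage(tasks, today=date(2024, 6, 1)):
        print(d.task_id, d.action, d.reason)
```

With these made-up tasks, T1 (overdue, unowned, duplicated, reproducible) gets escalated, T3 is only watched because its sole signal is the duplicate, and T2 is left alone. The part I care about is the `reason` string: every decision carries its own explanation, which is what turns the log into a narrative rather than just a list of statuses.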

What you end up with isn’t just a task list. It’s a running narrative of what the system is noticing and how it’s responding.

In a more concrete sense, it’s a to-do system that doesn’t just store tasks, but continuously inspects them, detects operational drift, and explains the decisions it makes about what needs attention.

The goal wasn’t to build a better task manager. It was to make the idea of a “self-maintaining workflow” legible, and to show how something closer to incident triage could apply to everyday work.

That ended up feeling more useful than I expected.

It shifts the role of AI a bit. Instead of being something you call to perform a task, it becomes something that’s continuously forming an understanding of what’s going on, and only stepping in when that understanding crosses a threshold.
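One way to picture “crossing a threshold” is a per-item evidence score that fades over time and only triggers when enough signals pile up close together. The sketch below is hypothetical: the `AttentionTracker` class, the `decay` factor, and the `threshold` value are my own assumptions, not necessarily how the demo does it.

```python
from typing import Dict, Set

class AttentionTracker:
    """Keeps a decaying evidence score per item and flags it once the score crosses a threshold."""

    def __init__(self, decay: float = 0.5, threshold: float = 3.0):
        self.decay = decay          # hypothetical: fraction of old evidence kept on each new observation
        self.threshold = threshold  # hypothetical: score at which the system steps in
        self.scores: Dict[str, float] = {}
        self.escalated: Set[str] = set()

    def observe(self, item_id: str, weight: float) -> bool:
        """Fold a new signal into the item's score; return True only on the first crossing."""
        score = self.scores.get(item_id, 0.0) * self.decay + weight
        self.scores[item_id] = score
        if score >= self.threshold and item_id not in self.escalated:
            self.escalated.add(item_id)
            return True
        return False

tracker = AttentionTracker(decay=0.5, threshold=3.0)
# A steady trickle of weak signals settles below 2.0 and never triggers.
print(any(tracker.observe("noisy-check", 1.0) for _ in range(20)))  # False
# Two strong signals in a row accumulate to 3.0 and trigger once.
print(tracker.observe("stuck-task", 2.0), tracker.observe("stuck-task", 2.0))  # False True
```

The decay is what keeps a noisy but harmless alert from eventually demanding attention. Here it only applies when a new signal arrives; a real system would more likely decay scores on a clock, but the shape of the idea is the same.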

I don’t think this replaces the “agents that do work” model. But it complements it in a way that feels important. Before anything can act effectively, something has to notice that action is needed in the first place.

This was a small project, mostly to make the idea concrete for myself. But it changed how I think about building with AI. Less focus on telling systems what to do, more focus on helping them recognize when something is off.

It feels like a subtle shift, but it opens up a lot of possibilities.

Originally published on Substack.

I write about how systems influence behavior, often in subtle ways. Not to explain everything, but to slow things down enough to see what’s usually missed. The aim is to help build better mental models. If this resonated, you can support my writing with a coffee.